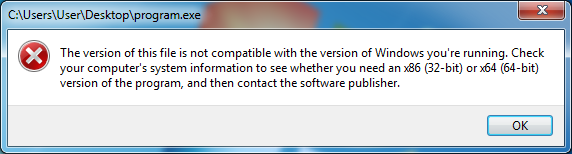

One of my earliest memories of computers goes back to my early teenage years. Around the time I grew out of floppy disks and was introduced to flash drives holding gigabytes, I read a book about C programming full of code snippets. I wanted to try running one, and my naive self thought that saving the code as an .exe file would do the job.

That was not the case, of course. But everyone has to start somewhere, right?

The early days of a tinkerer

Even before learning how to code, I did things that made the non-tech-savvy people around me think I was a hacker (which I was not).

Trying out Linux

Like many people, I was first introduced to Linux through Ubuntu. Wubi, the "Windows-based Ubuntu Installer", gave me peace of mind at the time, as I did not have to deal with partitioning (which was difficult for me back then). I ran lots of commands and broke the system many times, but I kept going back to it and learned what (not) to do to maintain a system.

Later on, I set up a dual boot with a proper standalone installation. I also started what others call "distro-hopping", through which I was introduced to Fedora, openSUSE, and Ubuntu with other desktop environments. Most of the time, though, I stuck with Fedora thanks to its bluish branding colour, its timely updates, its relative ease of use, and how closely it stays to what upstream projects offer (instead of creating customised solutions like openSUSE's YaST).

Modding smartphones

My first smartphone almost became an iPhone 4S, but I am glad that an Android device, a Samsung Galaxy S II in particular, took that place instead. Wishing to explore what it was capable of, I rooted it and tried overclocking it. The CPU, originally clocked at 1.2 GHz, ran stably at 1.4 GHz, whereas 1.5 GHz crashed the device shortly after being applied. I also tried adjusting voltages and overclocking the GPU, which I increased from 267 MHz to 400 MHz. Gaming in this state produced a lot of heat, but that was not really my concern at the time.

I then looked into so-called custom ROMs to try what the stock firmware did not offer. Some notable ones I can remember are CyanogenMod (long live LineageOS), Resurrection Remix, and AOKP, although I usually daily-drove the first one on the list. I also tried some custom kernels, through which I learned about and applied CPU scaling governors and I/O schedulers (other than ondemand and cfq, respectively) that were not available in the stock kernel. Before accidentally losing the device, I was able to get it to run Android 4.4 KitKat, whereas official support ended with Android 4.1 Jelly Bean.

The modifications I made to the poor Android device did not bring any performance improvement I could really feel, but the knowledge I gained back then eventually became a solid foundation for troubleshooting Linux systems in general.

The time I learned programming

At some point in my early-to-mid teens, I attended a class at a local tech training institute to learn programming, where I finally figured out how to write and run code in C and C++. The latter has a special place in my heart; it became my go-to language for trying out all sorts of things. As the standards evolved, I learned and used more features from the standard library, which made my code look nothing like its C counterpart and, by avoiding manual memory management for example, made my programmes somewhat safer.

Some time after my introduction to programming, I also learned a bit of Java, but I guess I never really liked `camelCase`. Soon, my computer would always have the Visual Studio and Eclipse IDEs ready (until I started to primarily use Linux, that is).

With these experiences, I believe I learned computational thinking early on in my life. It helped me learn new programming languages without much trouble, and come up with solutions intuitively.

The academic journey

University eventually came, and I started pursuing a bachelor's degree in computer science. In one introductory C++ lecture, I remember surprising a professor by writing code that calculated pitches and played Twinkle, Twinkle, Little Star using the `Beep` function in the Win32 API.

Speaking of pitches and music...

Learning music and combining both interests

I started learning to play the guitar some time before university, which I did by doing what I like: playing a video game, namely Rocksmith 2014.

While I did not become good enough to call myself a professional or to consider a career in the performing arts, those around me considered me better than average. I got along with peers who also played music, and had some chances to perform in front of about a thousand students, alongside some of the country's famous bands. They were unforgettable moments, and quite fun.

With interests in both computers and music, I naturally wanted to write programmes about the latter. Having already learned to play improvised guitar solos, I decided to create one that would play the other instruments for me.

My idea soon became part of my undergraduate thesis. I had a moderate understanding of music theory from piano lessons I had taken in the past, which helped me implement the desired features (like visualising scales and converting notes to frequencies). My own implementation of the fast Fourier transform algorithm was used to detect pitch but, given how difficult algorithmic composition was for me, I had to settle for basic rhythm patterns for the other instruments. I may revisit this idea in the future and turn it into something I can personally enjoy.
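Converting notes to frequencies, one of the features mentioned above, usually comes down to the twelve-tone equal temperament formula `f = 440 * 2^((n - 69) / 12)`. The snippet below is a minimal sketch of that formula, not the thesis implementation; it assumes MIDI note numbers (60 = C4, 69 = A4) with A4 tuned to 440 Hz, and `note_to_freq` is a hypothetical helper name. The same kind of calculation is what a program playing Twinkle, Twinkle, Little Star needs before it can hand a frequency to a beep function.

```shell
#!/bin/sh
# Minimal sketch of twelve-tone equal temperament, assuming MIDI note numbers
# (60 = C4, 69 = A4) and A4 tuned to 440 Hz.
# note_to_freq is a hypothetical helper name used only for illustration.
note_to_freq() {
  # f = 440 * 2^((n - 69) / 12); awk does the floating-point maths,
  # and printf rounds the result to two decimal places.
  awk -v n="$1" 'BEGIN { printf "%.2f\n", 440 * 2 ^ ((n - 69) / 12) }'
}

# Opening notes of "Twinkle, Twinkle, Little Star": C4 C4 G4 G4 A4 A4 G4
for n in 60 60 67 67 69 69 67; do
  note_to_freq "$n"
done
```

This prints 261.63, 392.00 and 440.00 for C4, G4 and A4. Moving up twelve notes (one octave) doubles the frequency, so note 81 gives 880.00.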

Web development

Parts of the curriculum covered various aspects of web development. Starting with basic HTML and CSS, I was soon able to make static pages, also using frameworks like Bootstrap. Later, with PHP, I found myself doing full-stack web development and database management.

What I had learned about web development up to then turned out to be what I used during my internship at the very institute that taught me, where I worked on a new web application. During the system's development, which took place across two academic terms (out of three per year), I had a chance to be part of what keeps the institute running and to integrate with its systems.

Covid, call of duty, and vehicle diagnosis

The Covid-19 pandemic started to seriously affect my community not long after my graduation. While many of my dear friends were already working and soon transitioned to remote work, I kind of lost momentum at that point.

That is not to say the pandemic was to blame; it was far from the reason. Because I had been studying abroad, I had delayed conscription to finish my studies uninterrupted. It was now time to serve the country, and as a result, I joined the Navy for a while. On and off the ship, I assisted petty officers with their administrative tasks.

Back home, I helped my father with his work running a vehicle repair shop. Starting with basic maintenance, I soon found myself single-handedly replacing parts like spark plugs and HVAC compressors.

However, according to him, what I helped with the most was diagnosing vehicles, especially imported ones for which resources in his native language may be scarce. Along the way, I learned to use OBD-II compliant diagnostic tools, with which I sometimes even performed variant coding (while trying to figure out German parameter names on Mercedes-Benz vehicles).

I already had some years of driving experience, but through this work, I believe I learned to be a more alert and responsible driver. Furthermore, I now had a newfound interest in repairing things, like computers.

Repairability and Linux, by the way

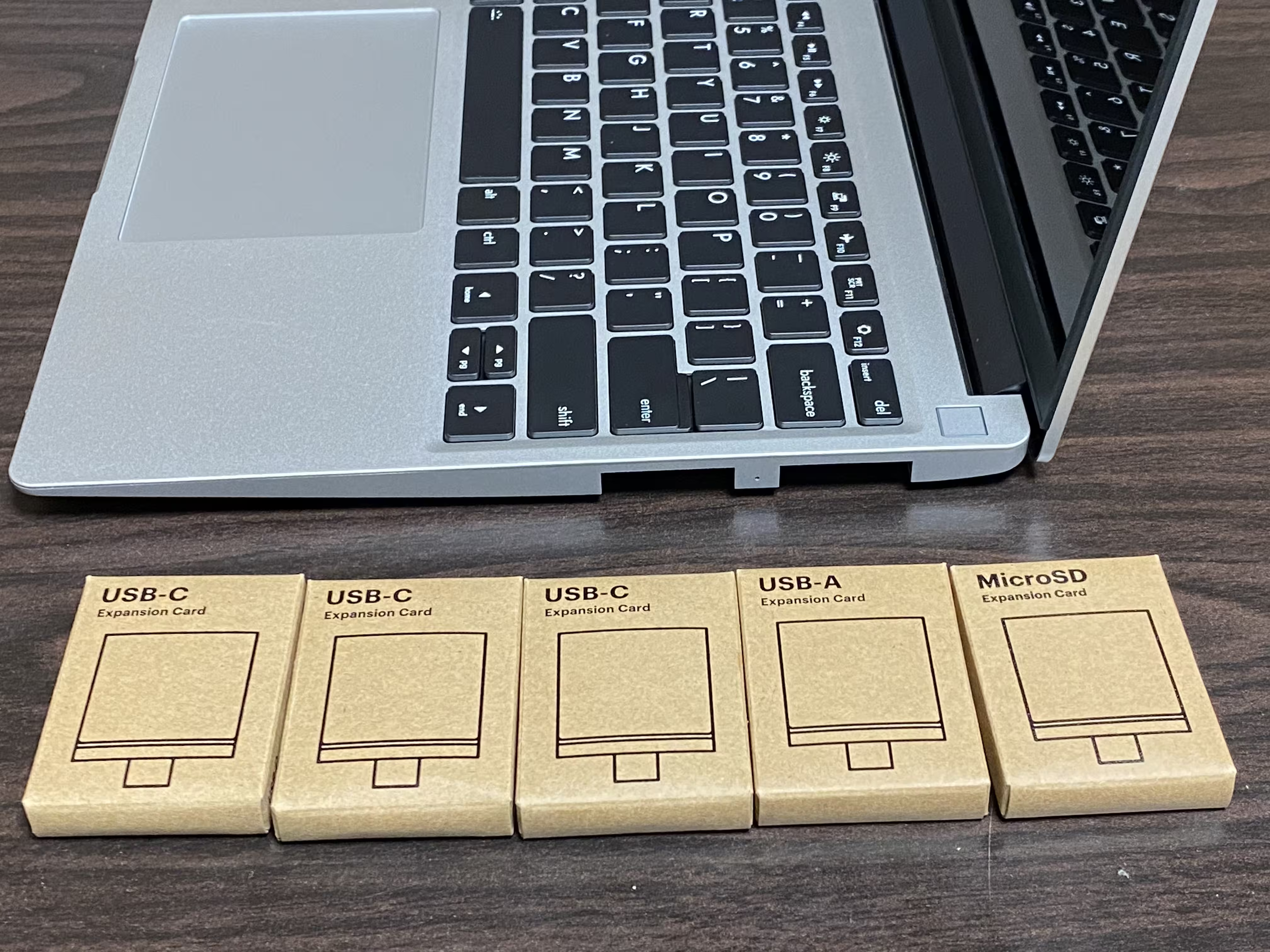

Speaking of repairing computers, it was around that time that I was introduced to Framework Computer and its then recently released Framework Laptop with 12th-generation Intel Core processors. There was no official way to ship one to my country, but if you know, you know.

I had previously been using a Surface Pro 3, which was no longer portable because of its failed battery. As the out-of-warranty device was impossible (or at least very difficult) to repair, the repair-friendly laptop caught my attention just when I thought I needed a new one.

I grabbed a DIY Edition and separately purchased its memory and storage from local online stores. After putting everything together, I was satisfied with the result.

The next step was to install an operating system. Up until this point, while I kept using Linux in some capacity, my primary OS had usually been Windows; that was about to change, as I decided to set up and run Arch Linux.

Two days of manual installation and configuration taught me a great deal about system management. To do things like setting up disk encryption without sacrificing convenience, I constantly referred to the ArchWiki and found relevant information on topics such as Secure Boot and systemd-cryptenroll.

Unfortunately for me, the display started acting weird only about a week after I began using the laptop. Fortunately, however, the Framework Support team provided me with a new display free of charge, which I installed myself.

Back to web development with Samsung

Some time later, someone suggested that I apply for a job training program called Samsung Software Academy for Youth (SSAFY, now Samsung Software·AI Academy for Youth). I thought it could be an opportunity to use and refine my skills, stay relevant, and experience collaboration. After a lengthy application and interview process, I was thankfully admitted to the program.

The one-year course was split into two major parts: the first six months of lessons and the remaining six months of work-like group projects. The first half was spent learning Java, problem-solving (by implementing algorithms and data structures), and web development (with Vue.js and Spring). At the end of the term, I also worked on a project with a partner, making use of what I had learned up to that point.

The second half was where I teamed up with several people, ideally in teams of six. There were three projects lasting about six weeks each; the first two were limited in scope, but the last one was not. It was great to get hands-on experience not only in commonly used technologies (such as React and FastAPI), but also in collaboration (as well as tools for it, like Jira). I am also especially grateful to the nice people who helped me get through a not-so-easy year.

Introduction to DevOps

At this point, I considered my skills to be either niche or not very appealing. While I used quite a bit of C++ (and even Rust, which I had started writing some time before), people mostly used these languages for embedded software, which was far from the graphical desktop applications I had been writing. Writing web applications was also something I knew how to do, but with just about everyone else (that I knew of, anyway) jumping into either front-end or back-end development, I felt that standing out among them would be challenging.

Someone then suggested that I learn about DevOps. Automating the management of web infrastructure soon became what I decided to pursue, despite the countless new things I thought I would have to learn going forward.

The declarative thinking

While self-hosting PeerTube, I came across a Linux distribution called NixOS. It was very different from any other operating system I had tried, and it took a while to understand how it even worked.

Once I did, however, I could not go back. Instead of instructing the machine with commands to reach my desired state, I would now just declare which state the machine should be in. Together with the ability to keep the configuration in a Git repository, this made it possible to set up a system before even performing the installation, and it was good to know that restoring the state would not take long if I ever had to start afresh.

My use of declarative programming soon extended to infrastructure management with Terraform and OpenTofu. As I expanded my knowledge to Kubernetes, the same style applied even to the environment running it, thanks to Talos Linux.

My personal values

There are some values I would like to adhere to while working on personal projects.

The usage of open software

Arguments (that I have seen) in favour of open-source software typically revolve around improved security. While public scrutiny may help, what I benefit from the most is the ability to read the code when the documentation is not clear enough.

I have two examples where this actually helped me. First, while packaging programmes with Nix, I had to read the code to confirm what I could not find anywhere else.

The solution was to wrap the program with `makeWrapper`. Judging by an example that had been presented to me, it seemed like I could set environment variables with it:

```shell
# adds `FOOBAR=baz` to `$out/bin/foo`’s environment
makeWrapper $out/bin/foo $wrapperfile --set FOOBAR baz
```

But what about the part where I want to unset one? I guess `--unset` makes sense, but where is any documentation to confirm this? It took a while to find what I had been looking for, which was hidden in the code:

Another example is when I integrated Tailscale with K3s; I had to pass an option to the k3s binary, but its accepted values were not documented in detail.

I could not find the accepted values for `--vpn-auth` documented anywhere, so I read the code and verified that:

- K3s internally runs `tailscale up`, eliminating the need to specify `services.tailscale.authKeyFile`
- `extraArgs` can be specified to pass additional parameters to `tailscale up`, which is necessary to advertise tags
- `extraArgs` are expected to be space-separated values
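To make those findings concrete, here is a minimal sketch of how a `--vpn-auth` value could be assembled. The auth key and tag are placeholders, and `TS_AUTH_KEY`, `TS_EXTRA_ARGS` and `VPN_AUTH` are hypothetical variable names. The `name=tailscale,joinKey=<key>,extraArgs=<args>` layout follows the comma-separated `key=value` format K3s uses for this option; writing the tag as a separate word instead of `--advertise-tags=tag:k3s` is only a precaution against an extra `=` inside the pair, not something verified here.

```shell
#!/bin/sh
# Placeholder values (hypothetical): substitute a real Tailscale auth key and
# a tag that the tailnet policy allows this node to advertise.
TS_AUTH_KEY="tskey-auth-EXAMPLE"
# extraArgs is split on spaces before reaching `tailscale up`, so the flag and
# its value are given as two words (assumption: this avoids an extra "=").
TS_EXTRA_ARGS="--advertise-tags tag:k3s"

# --vpn-auth takes comma-separated key=value pairs, so values must not contain commas.
VPN_AUTH="name=tailscale,joinKey=${TS_AUTH_KEY},extraArgs=${TS_EXTRA_ARGS}"
printf '%s\n' "$VPN_AUTH"

# On a real node, the value would then be handed to K3s, for example:
#   k3s server --vpn-auth="$VPN_AUTH"
```

Running it only prints the assembled value; the commented-out `k3s server` line is the part that would actually affect a node.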

As such, I believe it is important to be able to independently verify how something is done, which tends to be more challenging with closed-source software.

Getting things right

I am not perfect, and despite doing my best, chances are that I will screw up at some point. However, if I learn how something can be done better, I will likely do something about it, even if I have to face a steep learning curve as a consequence.

Let me take NixOS as an example. What I could have chosen instead was Ansible, a (relatively) well-known system configuration management suite. However, despite the Red Hat-owned software's relative ease of use, I decided to go with NixOS for a few reasons:

- I would otherwise need to take extra steps to ensure that my Ansible playbooks are idempotent (and/or convergent) and atomic.

- Configuration drift is prevented with immutability, where `/nix/store` is mounted as read-only.

  - Possible manual modifications can be discarded by mounting `/` as `tmpfs`.

- Several versions of the same software can be installed and used simultaneously.

- Rolling back to previous states is trivial without setting up snapshots.

Whenever possible, to the best of my knowledge, I aim to follow best practices and apply what I think is a more correct solution.

Tools do not matter

I know, I have been talking about them all along. However, I really mean it.

The most important thing to me is not so much what is used, but reaching the goal. While the two previous points are also pretty significant, I believe they should not block progress.

When I tried to configure and run Kubernetes the NixOS way, I realised I was trying very hard to do something it was just not designed to do well. Provisioning a cluster with OpenTofu instead made the process a lot easier.

I can learn new tools, which I seem to do pretty well (considering that I learned the Nix language). When colleagues are involved, it is important for me to listen to what everyone is comfortable with.

The present

At this time, my focus is on Kubernetes, the popular container orchestration system. I am currently migrating my self-hosted services to a cluster I previously set up.

On top of that, I am also preparing for a relocation. I may not be able to focus on my next project as a result, but I hope I can manage to do both well nonetheless.

About this blog

Thank you for taking the time to read what was probably a long set of paragraphs (assuming that you did)! This little space was created when someone suggested that I start a blog about technical stuff, which I am still doing to this day.

Here, I mostly document my journey towards achieving my goals and/or solving problems. It is not meant to teach you or provide you with useful knowledge; I believe I would have to learn more (about what I have written) to do that properly. However, as what I do sometimes differs from what others on the internet seem to be doing, I would still be grateful if anyone coming across this place finds a solution to their unique problem.

Since I started writing when I was introduced to DevOps, most of my posts so far relate to what I have learned about it. However, anything I find interesting can end up here, like how I used Zrythm to make some music.

All words written here, except where quoted, are my own. Please understand that, while I do my best to get the facts right through research, I may still make mistakes, especially given that I usually write about my learning experiences.

Contacts and external links

My email address, as well as links to my other profiles, can be found on my Keyoxide profile. For private communication, my OpenPGP public key can be downloaded from my Web Key Directory server; its fingerprint is as follows:

270C B11B 1189 E79A 17DC B783 1BDA FDC5 D60E 735C

The following are links to my profiles: